Running Spark on YARN requires a binary distribution of Spark which is built with YARN support. Binary distributions can be downloaded from the downloads page of the project website. There are two variants of Spark binary distributions you can download. One is pre-built with a certain version of Apache Hadoop; this Spark distribution contains a built-in Hadoop runtime, so we call it the with-hadoop Spark distribution. The other is pre-built with user-provided Hadoop; since this Spark distribution doesn't contain a built-in Hadoop runtime, it's smaller, but users have to provide a Hadoop installation separately. We call this variant the no-hadoop Spark distribution.

Unlike other cluster managers supported by Spark, in which the master's address is specified in the `--master` parameter, in YARN mode the ResourceManager's address is picked up from the Hadoop configuration, so the `--master` parameter is simply `yarn`.

To launch a Spark application in cluster mode:

`--jars my-other-jar.jar,my-other-other-jar.jar \`
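As a rough sketch of how such a launch command fits together, the Python helper below assembles a `spark-submit` argument list for YARN. The flags `--class`, `--master`, `--deploy-mode` and `--jars` are standard `spark-submit` options, and on YARN the master value is just `yarn` because the ResourceManager address comes from the Hadoop configuration. The function name `build_spark_submit_args`, the class name `my.main.Class` and the JAR file names are hypothetical placeholders, not part of Spark.

```python
import shlex
from typing import List, Sequence


def build_spark_submit_args(
    main_class: str,
    app_jar: str,
    deploy_mode: str = "cluster",
    extra_jars: Sequence[str] = (),
    app_args: Sequence[str] = (),
    spark_submit: str = "./bin/spark-submit",
) -> List[str]:
    """Assemble a spark-submit argument list for a YARN launch (illustrative helper)."""
    if deploy_mode not in ("cluster", "client"):
        raise ValueError(f"unknown deploy mode: {deploy_mode!r}")

    args = [
        spark_submit,
        "--class", main_class,
        # On YARN the master is just "yarn"; the ResourceManager address
        # is read from the Hadoop configuration, not from this flag.
        "--master", "yarn",
        "--deploy-mode", deploy_mode,
    ]
    if extra_jars:
        # --jars takes one comma-separated list of additional JARs.
        args += ["--jars", ",".join(extra_jars)]
    args.append(app_jar)
    args.extend(app_args)
    return args


if __name__ == "__main__":
    cmd = build_spark_submit_args(
        main_class="my.main.Class",   # hypothetical application class
        app_jar="my-main-jar.jar",    # hypothetical application JAR
        extra_jars=["my-other-jar.jar", "my-other-other-jar.jar"],
    )
    # Print a shell-quoted command line; nothing is executed here.
    print(shlex.join(cmd))
```

Building the command as a list rather than a single string avoids quoting problems if it is later handed to `subprocess.run`; switching `deploy_mode` to `"client"` keeps the driver in the submitting process.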

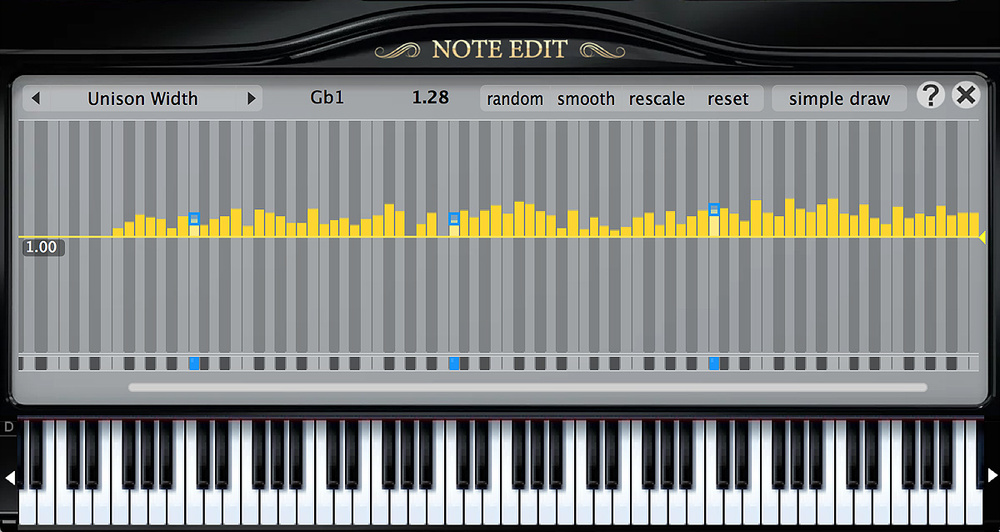


- Available patterns for SHS custom executor log URL
- Resource Allocation and Configuration Overview
- Configuring the External Shuffle Service
- Launching your application with Apache Oozie
- Using the Spark History Server to replace the Spark Web UI
- Running multiple versions of the Spark Shuffle Service

Support for running on YARN was added to Spark in version 0.6.0, and improved in subsequent releases.

Security features like authentication are not enabled by default. When deploying a cluster that is open to the internet or an untrusted network, it's important to secure access to the cluster to prevent unauthorized applications from running on the cluster. Please see Spark Security and the specific security sections in this doc before running Spark.

Launching Spark on YARN

Ensure that `HADOOP_CONF_DIR` or `YARN_CONF_DIR` points to the directory which contains the (client side) configuration files for the Hadoop cluster. These configs are used to write to HDFS and connect to the YARN ResourceManager. The configuration contained in this directory will be distributed to the YARN cluster so that all containers used by the application use the same configuration. If the configuration references Java system properties or environment variables not managed by YARN, they should also be set in the Spark application's configuration (driver, executors, and the AM when running in client mode).

There are two deploy modes that can be used to launch Spark applications on YARN. In cluster mode, the Spark driver runs inside an application master process which is managed by YARN on the cluster, and the client can go away after initiating the application. In client mode, the driver runs in the client process, and the application master is only used for requesting resources from YARN.
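The requirement that `HADOOP_CONF_DIR` or `YARN_CONF_DIR` point at the client-side Hadoop configuration can be checked before submitting anything. The following is a minimal pre-flight sketch in Python, not part of Spark itself: the function name `find_hadoop_conf_dir` is hypothetical, and checking `HADOOP_CONF_DIR` before `YARN_CONF_DIR` is an assumption made for illustration only.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def find_hadoop_conf_dir(env: Mapping[str, str] = os.environ) -> Optional[Path]:
    """Return the first configured Hadoop/YARN conf directory that exists.

    Assumption: HADOOP_CONF_DIR is checked before YARN_CONF_DIR. This order
    is chosen for the sketch and is not taken from the Spark documentation.
    """
    for var in ("HADOOP_CONF_DIR", "YARN_CONF_DIR"):
        value = env.get(var)
        # Skip unset or empty variables and paths that are not directories.
        if value and Path(value).is_dir():
            return Path(value)
    return None


if __name__ == "__main__":
    conf_dir = find_hadoop_conf_dir()
    if conf_dir is None:
        print("Neither HADOOP_CONF_DIR nor YARN_CONF_DIR names an existing directory")
    else:
        print(f"Using Hadoop client configuration from {conf_dir}")
```

Passing the environment as a parameter keeps the check easy to exercise with a plain dictionary instead of the real process environment.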